Most organizations treat their AI adoption strategy as a technology problem.

Here’s the pattern that keeps surfacing: the tools are rarely the hard part. The hard part is what happens to the humans in the middle of it.

The gap that doesn’t close on its own

Start here, because everything else depends on it.

Leadership is operating from one set of assumptions about AI. Employees are navigating a different reality. The C-suite sees competitive advantage, investment momentum, and strategic opportunity. Employees often see something being done to them: tools selected without their input, roles quietly reframed, and an expectation to adapt on a timeline they had no hand in setting.

That gap doesn’t close through communication campaigns or training programs. It closes through how organizations actually structure the experience of AI adoption for the people doing the work. The expectation gap between leadership and workforce is bigger than most organizations want to admit, and it’s not primarily a technology problem. It’s a human one.

The natural instinct is to jump to co-creation as the answer. Bring employees in. Build with them, not at them. That instinct is right. But it skips a step.

The step that unlocks everything else

Most organizations treat governance as the thing that slows adoption down. A compliance checkbox. A legal requirement. Something to get through before the real work begins. Or something to skip entirely.

That framing gets it exactly backwards.

Governance isn’t the thing that slows you down. It’s the thing that gives people permission to move. When people know what’s allowed, they’re free to try things within that boundary. When they don’t know, they don’t stop using AI. They use it quietly, without support, outside of any organizational visibility. Shadow AI isn’t a future risk; it’s a current reality at most organizations. The question isn’t whether it’s happening. It’s whether it’s happening with organizational support or without it.

But governance without access is just policy on paper. Organizations can’t ask people to experiment with AI and then not give them the tools to do it. Yet that’s exactly what happens: employees are encouraged to explore, but licenses are limited and individuals are left to figure it out on their own. When organizations do invest in access, it should be structured for shared use, not siloed by individual. That means shared team environments where organizational knowledge gets built in, where the prompts that work get documented, and where custom instructions, shared context, and institutional memory live in one place and accumulate over time.

Governance, done well, creates the conditions for experimentation. It makes it safe, structurally, not just rhetorically, for people to try things, push boundaries, and build the kind of collective fluency that drives real innovation. That safety, paired with real access, is not a soft outcome. It’s the prerequisite for everything that follows.

What becomes possible when people feel safe to build

Here’s what that safety actually unlocks: the ability to build together, not just alongside each other.

The organizations pulling ahead in AI aren’t the ones that moved fastest. They’re the ones that moved with clarity and brought people in as genuine co-creators from the start.

Velocity without shared direction creates rework. Co-creation with a clear framework creates momentum. Those are not the same thing, and the difference shows up months later when adoption either sustains or quietly stalls.

What governance and real access make possible is a shift in how people relate to the work itself. When someone has been given real permission to experiment, the tools to act on that permission, and the support to build with others, they stop experiencing the transformation as something being done to them. They start experiencing it as something they’re part of building. That shift, from subject to author, is what actually closes the leadership-workforce gap.

The gap isn’t primarily an information problem. Leaders and employees are often reading the same headlines and sitting in the same all-hands. What separates them is that one group feels ownership over the direction and the other doesn’t. Co-creation, supported by governance that makes experimentation genuinely safe and resourced, is how you transfer that ownership. It’s not a cultural initiative. It’s the mechanism by which AI adoption sustains beyond the pilot phase.

Governance is the bridge, not just the foundation

Here’s the reframe worth sitting with: good governance isn’t just a foundation for co-creation. It’s also part of the bridge that closes the leadership-workforce gap.

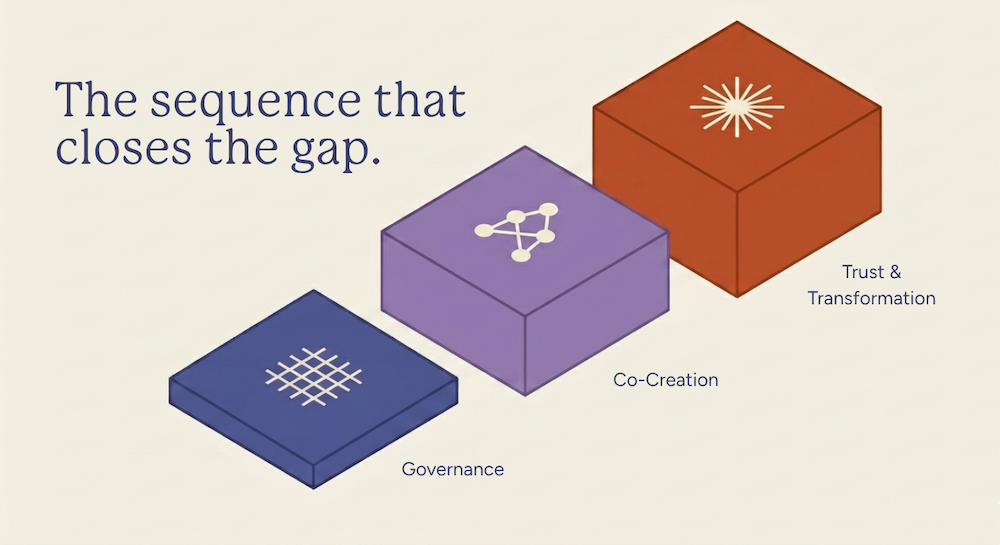

Most AI strategy conversations treat alignment, co-creation, and governance as separate workstreams. They’re not. They’re a sequence.

Governance creates the conditions to experiment. Safety enables genuine co-creation. Co-creation builds trust and connection. Trust and connection produce a workforce that’s invested, not just instructed. And an invested workforce is what finally puts leadership and employees in the same conversation about AI.

Each step depends on the one before it. Strip out governance and you get co-creation theater: invitations to participate that no one trusts, because the conditions for real experimentation don’t exist.

Skip co-creation and you get a workforce that’s technically informed but emotionally disengaged from the transformation.

Without that engagement, the gap between C-suite ambition and employee experience keeps widening, regardless of how much you invest.

Show, don’t tell

The clearest proof of this sequence working isn’t a framework or a research report. It’s a demo.

When a team builds something with real organizational support, with governance that said “try it” and the tools to actually do it, with co-creation that brought the right people into the room, what they produce is tangible in a way that slides never are. And when they show it, people don’t just leave impressed. They leave ready to build.

That’s what becomes possible when the conditions are right. The demo is the evidence of governance and co-creation working together. “Show, don’t tell” isn’t just a presentation tactic. It’s what trust looks like when it’s made visible.

Where to start

If you’re leading an AI adoption strategy, or watching one unfold around you, the question worth sitting with isn’t “are we moving fast enough?” It’s “have we created the conditions for our people to move with us?”

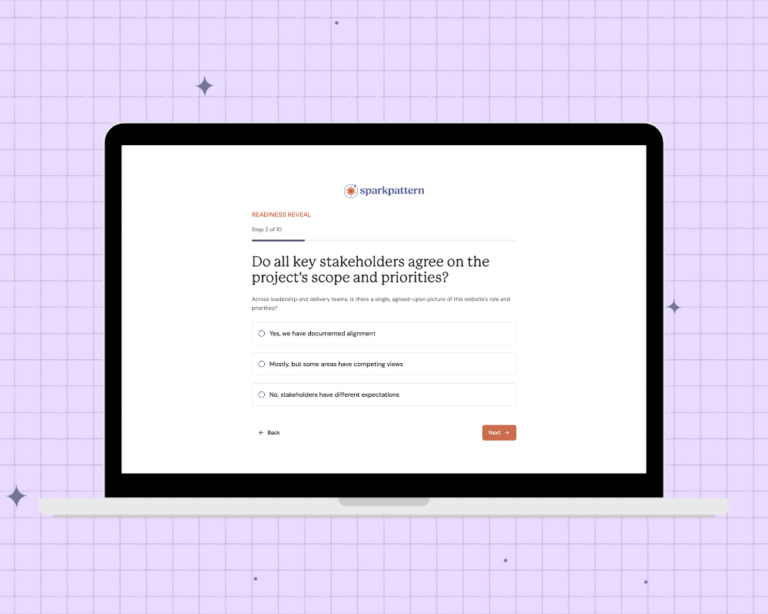

That means looking honestly at whether your governance approach is creating permission and real access, or just protection. Whether your co-creation efforts are structural or performative. Whether employees feel like authors of this change or recipients of it.

The organizations that will get the most from AI are not the ones with the most advanced tools. They’re the ones where leadership and employees are finally in the same conversation, because someone took the time to build the bridge.

Governance first. Co-creation follows. Trust compounds.

That’s the sequence. And it starts with being honest about which step you’re actually on.